Edge AI Revolution: Real-Time Data Processing Redefined

Introduction

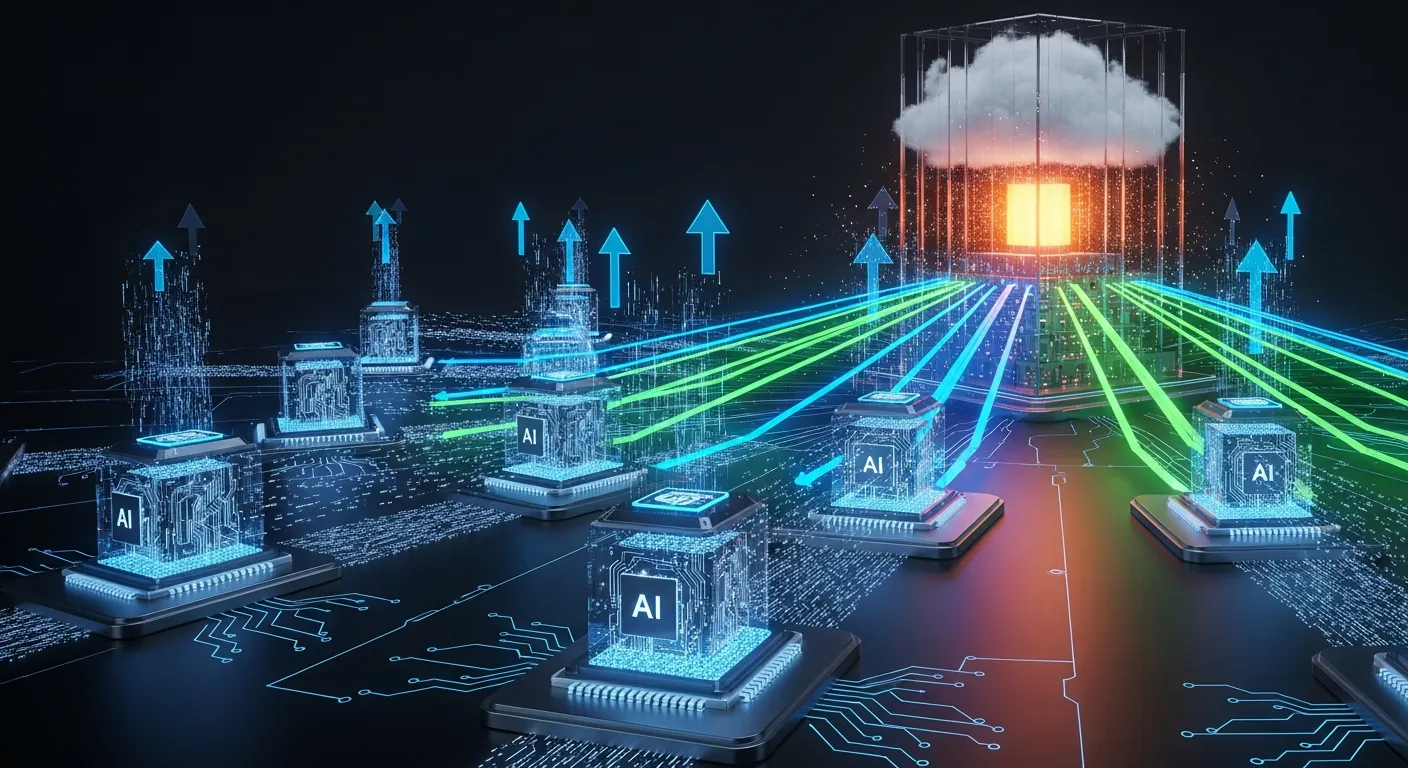

The rise of Edge AI is reshaping how data is processed by moving compute resources closer to the source of data generation. This shift reduces round‑trip times, lowers network load, and opens new possibilities for applications that demand immediate responses.

Core Concept

At its core, Edge AI combines artificial intelligence models with edge computing hardware to perform inference directly on devices such as sensors, gateways, or micro‑data centers. By eliminating the need to send raw data to distant clouds, Edge AI enables real‑time insights and actions.

Architecture Overview

A typical Edge AI architecture consists of a layered stack where sensors collect raw signals, a lightweight edge processor runs optimized models, and a communication layer synchronizes results with central services for long‑term analytics and model updates.
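
The layered stack described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the `Reading`, `Result`, `edge_infer`, and `CommunicationLayer` names are hypothetical, the "model" is a stand-in threshold rule, and the upstream `send` callback simply appends to a list where a real deployment would call a network client.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Reading:
    """One raw sample from the sensor layer (hypothetical shape)."""
    sensor_id: str
    value: float


@dataclass
class Result:
    """Compact inference output produced on the edge processor."""
    sensor_id: str
    label: str


def edge_infer(reading: Reading, threshold: float = 0.8) -> Result:
    # Stand-in for an optimized model: a simple threshold classifier.
    label = "anomaly" if reading.value > threshold else "normal"
    return Result(reading.sensor_id, label)


class CommunicationLayer:
    """Buffers results locally and hands them upstream in batches."""

    def __init__(self, send: Callable[[List[Result]], None], batch_size: int = 3):
        self.send = send
        self.batch_size = batch_size
        self.buffer: List[Result] = []

    def publish(self, result: Result) -> None:
        self.buffer.append(result)
        if len(self.buffer) >= self.batch_size:
            self.send(self.buffer)
            self.buffer = []  # start a fresh buffer; the sent batch is left untouched


# Assumption: "uploading" is modeled as appending the batch to a list.
uploaded: List[List[Result]] = []
comm = CommunicationLayer(send=uploaded.append, batch_size=3)

for i, value in enumerate([0.1, 0.95, 0.4, 0.2]):
    comm.publish(edge_infer(Reading(f"s{i}", value)))
```

Batching in the communication layer is one possible design choice: it keeps the radio or uplink idle most of the time, at the cost of delaying central analytics by up to one batch.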

Key Components

- Edge Processor

- Sensor Fusion Module

- Model Optimizer

- Communication Interface

How It Works

Data captured by sensors is pre‑processed and fed into a compressed neural network that has been pruned and quantized for the target hardware. The edge processor executes the model, produces a prediction, and triggers local actuators or streams concise results to the cloud for further aggregation.
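
To make the pruning and quantization step concrete, here is a small sketch on a flat list of weights. It assumes magnitude-based pruning and symmetric per-tensor int8 quantization, which are common choices but not necessarily the ones a given toolchain applies. All function names are illustrative.

```python
from typing import List, Tuple


def prune_by_magnitude(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the smallest-magnitude weights; `sparsity` is the fraction removed."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value.
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization to the integer range [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.02, -1.27, 0.5, -0.01, 0.64, 0.0]
pruned = prune_by_magnitude(weights, sparsity=0.5)  # drops the three smallest
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)
```

Each restored weight differs from its pruned original by at most half a quantization step (`scale / 2`). That rounding error is the accuracy cost of storing one byte per weight instead of four.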

Use Cases

- Predictive maintenance in manufacturing

- Real-time traffic management

- Smart video analytics

- Autonomous drone navigation

Advantages

- Lower latency: inference runs on the device with no network round trip, so responses can arrive within milliseconds, depending on the model and hardware

- Bandwidth savings because only inference results are transmitted
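
A back-of-the-envelope calculation shows where the bandwidth savings come from. The figures below are assumptions chosen for illustration only: an uncompressed 1080p RGB camera at 30 frames per second, and a 200-byte result message per frame. A compressed video stream would narrow the gap considerably.

```python
# Assumed camera parameters (illustrative, not measured).
WIDTH, HEIGHT = 1920, 1080
BYTES_PER_PIXEL = 3          # 8-bit RGB
FRAMES_PER_SECOND = 30

# Assumed size of one serialized inference result (labels, boxes, scores).
RESULT_BYTES_PER_FRAME = 200

raw_bytes_per_s = WIDTH * HEIGHT * BYTES_PER_PIXEL * FRAMES_PER_SECOND
result_bytes_per_s = RESULT_BYTES_PER_FRAME * FRAMES_PER_SECOND

# Fraction of upstream traffic avoided by sending results instead of raw frames.
reduction = 1 - result_bytes_per_s / raw_bytes_per_s

print(f"raw stream:     {raw_bytes_per_s / 1e6:.1f} MB/s")
print(f"results only:   {result_bytes_per_s / 1e3:.1f} kB/s")
print(f"traffic avoided: {reduction:.4%}")
```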

Limitations

- Limited compute and memory resources restrict model size

- Power constraints on remote devices can limit continuous operation

Comparison

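Compared with cloud‑only AI, Edge AI offers faster response times and lower data transfer costs because inference happens next to the data source. Traditional on‑premise servers provide more raw compute power than edge devices, but they still sit at least one network hop away from the sensors and cannot match the proximity advantage of edge deployment.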

Performance Considerations

Performance depends on model optimization techniques, hardware acceleration (GPU, NPU, TPU, or another ASIC), and efficient data pipelines. Balancing accuracy against inference speed is critical, and profiling on the target edge device helps identify where time is actually spent.
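
As a starting point for profiling, the sketch below times repeated calls to an inference function and reports the mean, median, and tail latency. It uses only the Python standard library. `fake_inference` is a placeholder workload, not a real model, and the warm-up and run counts are arbitrary defaults.

```python
import statistics
import time
from typing import Callable, Dict, List


def profile_latency(fn: Callable[[], object], warmup: int = 5, runs: int = 50) -> Dict[str, float]:
    """Time `fn` repeatedly and summarize per-call latency in milliseconds."""
    for _ in range(warmup):
        fn()  # warm caches and lazy initialization before measuring

    samples_ms: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1000.0)

    samples_ms.sort()
    p95_index = min(len(samples_ms) - 1, int(0.95 * len(samples_ms)))
    return {
        "mean_ms": statistics.fmean(samples_ms),
        "p50_ms": statistics.median(samples_ms),
        "p95_ms": samples_ms[p95_index],
        "max_ms": samples_ms[-1],
    }


def fake_inference() -> int:
    # Placeholder workload standing in for a model forward pass.
    return sum(i * i for i in range(10_000))


stats = profile_latency(fake_inference)
print(stats)
```

Tail latency (`p95_ms`, `max_ms`) usually matters more than the mean for real-time control loops, since one slow inference can miss a deadline even when the average looks fine.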

Security Considerations

Edge AI expands the attack surface because models and data live on physically accessible devices, so deployments need secure boot, encrypted model storage, and runtime integrity checks. Privacy improves when raw data stays on the device, yet secure communication channels remain essential for updates and telemetry.
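
One simple form of integrity check is to compare a model file's SHA-256 digest against a trusted value before loading it. The sketch below assumes the expected digest comes from a trusted source such as a signed manifest, which is outside the scope of the example. The file name and helper functions are hypothetical.

```python
import hashlib
import hmac
import os
import tempfile


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large models do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def model_is_intact(path: str, expected_hex: str) -> bool:
    """Return True only if the file's digest matches the trusted digest."""
    # compare_digest avoids leaking the mismatch position through timing.
    return hmac.compare_digest(sha256_of_file(path), expected_hex)


# Demonstration with a throwaway file standing in for a model artifact.
with tempfile.TemporaryDirectory() as tmp:
    model_path = os.path.join(tmp, "model.bin")
    with open(model_path, "wb") as f:
        f.write(b"fake-model-weights")

    trusted = sha256_of_file(model_path)  # assumption: recorded at build time
    ok_before = model_is_intact(model_path, trusted)

    with open(model_path, "ab") as f:
        f.write(b"tampered")  # simulate modification on the device
    ok_after = model_is_intact(model_path, trusted)
```

A bare hash only detects accidental or unsophisticated changes. An attacker who can rewrite the model can often rewrite a locally stored digest too, which is why the expected value should be signed or anchored in hardware-backed storage.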

Future Trends

By 2026, Edge AI is expected to make wider use of federated learning, which improves models continuously without collecting raw data centrally. Other anticipated developments include neuromorphic chips for ultra‑low power inference and standardized AI model containers that simplify deployment across heterogeneous edge fleets.
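
The core aggregation step of federated learning can be illustrated with a weighted average of client model weights, in the style of federated averaging. This is a simplified sketch: real systems add secure aggregation, client sampling, and multiple training rounds, and the `federated_average` name and data layout here are assumptions.

```python
from typing import List, Tuple


def federated_average(updates: List[Tuple[List[float], int]]) -> List[float]:
    """Average client weight vectors, weighted by each client's sample count.

    Each update is (weights, num_samples). Only weights leave the device;
    the raw training data never does.
    """
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no samples reported by any client")

    dim = len(updates[0][0])
    avg = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all clients must report vectors of the same length")
        for i, w in enumerate(weights):
            avg[i] += w * n / total
    return avg


# Two hypothetical edge devices with different amounts of local data.
updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
global_weights = federated_average(updates)
```

The device with 300 samples contributes three times as much as the one with 100, so the result sits closer to its weights.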

Conclusion

Edge AI is a catalyst for real‑time data processing, delivering low latency, bandwidth efficiency, and localized intelligence. As hardware advances and software ecosystems mature, the impact of Edge AI will extend to virtually every industry that relies on instantaneous decision making.