How Service Mesh Boosts Microservice Communication

Introduction

Microservices have transformed application design, but they also introduce communication complexity. A service mesh offers a dedicated infrastructure layer that handles inter-service traffic, making connections reliable, observable, and secure without changing application code.

Core Concept

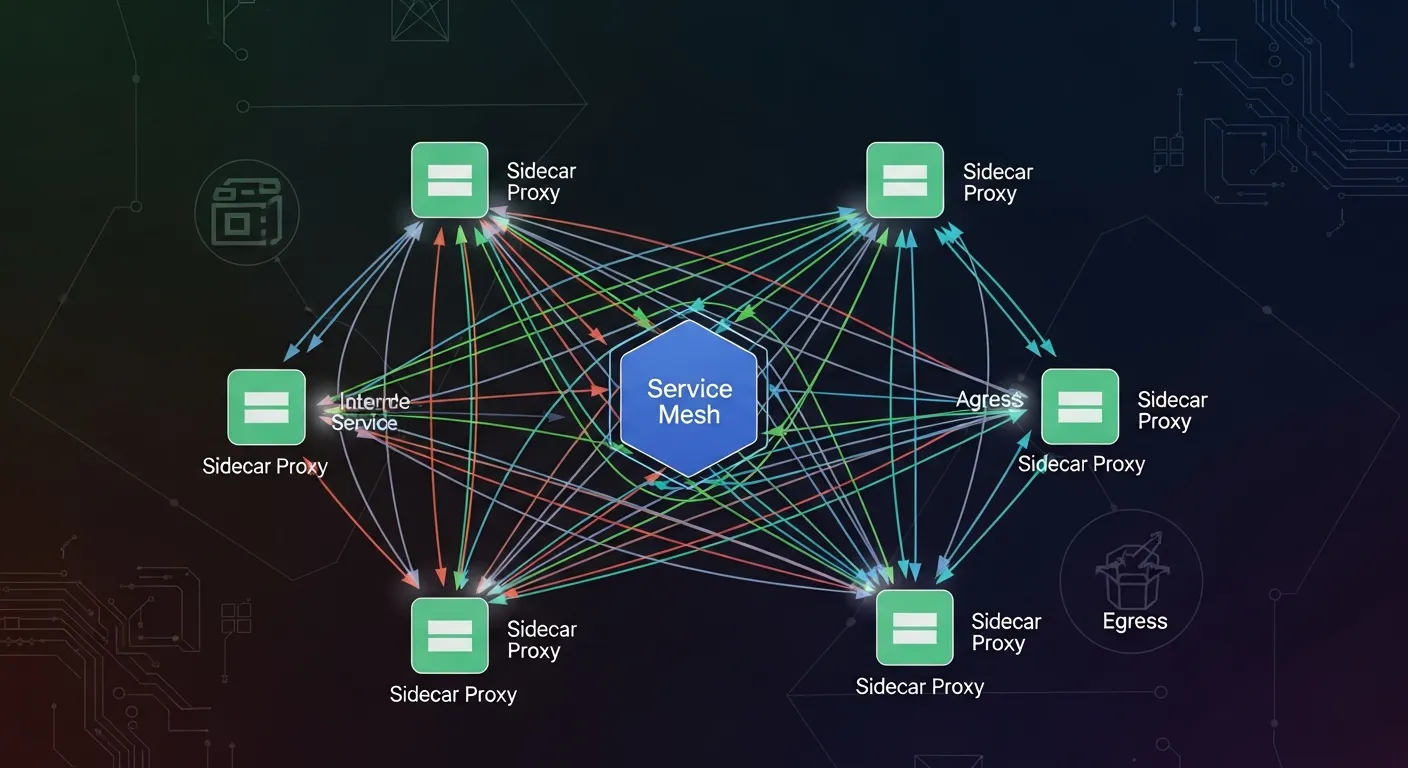

At its core, a service mesh is a set of lightweight network proxies, one deployed alongside each service instance. These sidecar proxies intercept all inbound and outbound calls, so they can enforce the policies distributed by the mesh control plane and collect telemetry on every request.

Architecture Overview

The mesh consists of a data plane made of sidecar proxies and a control plane that provides configuration, service discovery, and policy distribution. Proxies form a mesh network, communicating with each other via standard protocols such as HTTP/1.1, HTTP/2, gRPC or TCP.
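The split between the two planes can be sketched in a few lines of Python. This is a toy in-memory model, not the API of any real mesh: the class names `ControlPlane` and `SidecarProxy`, the `register` and `attach` methods, and the endpoint strings are all assumptions made for illustration. It shows the control plane pushing a versioned snapshot of the service registry to every proxy, and each proxy resolving destinations from its local copy.

```python
from dataclasses import dataclass, field


@dataclass
class SidecarProxy:
    """Data-plane proxy (hypothetical) that keeps a local copy of its routes."""
    service: str
    routes: dict[str, list[str]] = field(default_factory=dict)
    config_version: int = 0

    def apply_config(self, version: int, routes: dict[str, list[str]]) -> None:
        # Replace the local snapshot with a copy of what the control plane sent.
        self.routes = {name: list(endpoints) for name, endpoints in routes.items()}
        self.config_version = version

    def resolve(self, destination: str) -> list[str]:
        # Lookups use only local state; no call to the control plane is made.
        return self.routes.get(destination, [])


class ControlPlane:
    """Holds the service registry and pushes snapshots to attached proxies."""

    def __init__(self) -> None:
        self.registry: dict[str, list[str]] = {}
        self.proxies: list[SidecarProxy] = []
        self.version = 0

    def attach(self, proxy: SidecarProxy) -> None:
        # A newly attached proxy immediately receives the current snapshot.
        self.proxies.append(proxy)
        proxy.apply_config(self.version, self.registry)

    def register(self, service: str, endpoint: str) -> None:
        # Service discovery update: record the endpoint, then push to all proxies.
        self.registry.setdefault(service, []).append(endpoint)
        self.version += 1
        for proxy in self.proxies:
            proxy.apply_config(self.version, self.registry)
```

Keeping a local snapshot in each proxy is one common design choice: request handling does not depend on the control plane being reachable at that moment, at the cost of proxies briefly serving slightly stale configuration.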

Key Components

- Sidecar proxy

- Control plane

- Data plane

- Policy API

- Telemetry collection

How It Works

When a service sends a request, the call is routed to its local sidecar. The proxy looks up the routing rules it has received from the control plane, applies retries, circuit breaking, or mutual TLS as configured, then forwards the request to the destination's proxy, which hands it to the destination service. Responses follow the reverse path, enabling consistent observability and security across the entire call chain.
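The retry and circuit-breaking step on the outbound path might look roughly like the sketch below. It is a simplified illustration, not how any particular proxy implements it: `CircuitBreaker`, `forward_with_retries`, and the `send` callable are hypothetical names, the breaker only counts consecutive failures, and the half-open recovery state and backoff delays that production proxies typically add are left out.

```python
class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying the upstream."""


class CircuitBreaker:
    """Opens after a fixed number of consecutive failures (simplified)."""

    def __init__(self, failure_threshold: int = 3) -> None:
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0

    @property
    def is_open(self) -> bool:
        return self.consecutive_failures >= self.failure_threshold

    def record_success(self) -> None:
        self.consecutive_failures = 0

    def record_failure(self) -> None:
        self.consecutive_failures += 1


def forward_with_retries(send, request, breaker, max_attempts=3):
    """Call send(request), retrying on ConnectionError up to max_attempts times."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    if breaker.is_open:
        # Fail fast instead of adding load to an unhealthy upstream.
        raise CircuitOpenError("upstream marked unhealthy")
    last_error = None
    for _ in range(max_attempts):
        try:
            response = send(request)
        except ConnectionError as error:
            breaker.record_failure()
            last_error = error
            if breaker.is_open:
                break  # stop retrying once the breaker trips
            continue
        breaker.record_success()
        return response
    raise last_error
```

Because this logic lives in the proxy rather than in each service, every caller gets the same retry budget and failure threshold without linking a client library.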

Use Cases

- Canary releases and blue-green deployments

- Automatic retries and timeout handling

- Zero-trust mutual TLS between services

- Distributed tracing and metrics aggregation

- Traffic shaping and load balancing
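As an illustration of the canary and traffic-shaping cases, the sketch below splits requests between versions by weight. It is one possible approach under stated assumptions: the function name `pick_version`, the use of a request ID as the hashing key, and the version labels are hypothetical, and weights are assumed to be non-negative integers. Hashing the ID (rather than picking at random) keeps a given request ID pinned to the same version.

```python
import hashlib


def pick_version(request_id: str, weights: dict[str, int]) -> str:
    """Map a request ID to a version in proportion to integer weights."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    # Derive a stable bucket in [0, total) from the request ID.
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % total
    # Walk the cumulative weights until the bucket falls inside a range.
    cumulative = 0
    for version, weight in weights.items():
        cumulative += weight
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable: bucket is always below total")
```

Calling `pick_version(request_id, {"v1": 90, "v2": 10})` would send roughly a tenth of distinct request IDs to `v2`; shifting the weights step by step toward `v2` gives a gradual rollout.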

Advantages

- Uniform traffic management across all services

- Built-in observability without code changes

- Strong security with automatic mTLS

- Resilience patterns applied consistently

- Decouples networking concerns from business logic

Limitations

- Increased resource consumption due to sidecar proxies

- Operational complexity of managing the control plane

- Steep learning curve for teams new to mesh concepts

- Potential latency overhead in high-throughput paths

- Debugging issues may require proxy-level insight

Comparison

Compared to traditional API gateways, a service mesh operates at the service-to-service layer, providing granular, per-call control rather than edge-only routing. Unlike custom libraries, the mesh centralizes policies, reducing duplication and version drift.

Performance Considerations

Sidecar proxies add CPU and memory overhead, and every call passes through two extra proxy hops, so sizing the mesh requires benchmarking request latency and throughput under representative load, both with and without the proxies. Choosing a lightweight proxy, enabling proxy caching, and fine-tuning circuit-breaker thresholds can mitigate impact.
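One simple way to quantify the overhead is to compare latency percentiles from two runs of the same load test, one without the mesh and one with it. The helper below is a minimal sketch under that assumption; the function names `percentile` and `proxy_overhead` are hypothetical, the inputs are lists of per-request latencies in milliseconds that you would collect yourself, and the nearest-rank method is used for simplicity.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def proxy_overhead(baseline_ms, meshed_ms, percentiles=(50, 99)):
    """Return {pct: meshed latency minus baseline latency} in milliseconds."""
    return {
        pct: percentile(meshed_ms, pct) - percentile(baseline_ms, pct)
        for pct in percentiles
    }
```

Looking at a tail percentile such as p99 alongside the median matters here, since proxy overhead that is negligible on average can still be noticeable on the slowest requests.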

Security Considerations

The mesh automates mutual TLS certificate issuance and rotation, enforcing encryption for traffic between meshed services. Policy APIs let operators define least-privilege access, rate limits, and authentication rules, but a misconfigured policy (for example, an overly broad allow rule) can expose internal services.
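Least-privilege access usually means default deny: a call is rejected unless a rule explicitly permits it. The sketch below models that check in plain Python. It does not reflect the policy format of any specific mesh; `AllowRule`, `is_allowed`, and the service names used with it are hypothetical, and the caller identity is assumed to have been established already, for instance from the peer's mTLS certificate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AllowRule:
    """One explicit permission (hypothetical shape)."""
    source: str                # caller identity, assumed verified via mTLS
    destination: str           # service being called
    methods: frozenset[str]    # permitted HTTP methods, upper case


def is_allowed(rules, source, destination, method):
    """Default deny: permit only if some rule matches all three fields."""
    return any(
        rule.source == source
        and rule.destination == destination
        and method.upper() in rule.methods
        for rule in rules
    )
```

With a single rule such as `AllowRule("orders", "payments", frozenset({"POST"}))`, a `POST` from `orders` to `payments` passes, while a `GET` from `orders` or any call from another service is denied because nothing matches.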

Future Trends

By 2026, service meshes are expected to integrate more deeply with serverless platforms, support AI-driven traffic routing, and provide out-of-the-box compliance modules for zero-trust frameworks. Multi-cluster and multi-cloud meshes are likely to become native features, simplifying global service discovery.

Conclusion

A service mesh abstracts the networking layer, giving developers freedom to focus on business logic while operators gain control, visibility, and security. When deployed thoughtfully, it turns microservice communication from a challenge into a managed capability.