How Service Mesh Boosts Resilience in Cloud-Native Apps

Introduction

Cloud‑native applications are built from many loosely coupled services that must communicate reliably across dynamic environments. As these systems grow, ensuring uptime, handling failures, and maintaining performance become increasingly complex. A service mesh offers a dedicated infrastructure layer that abstracts these concerns, allowing developers to focus on business logic while the mesh manages resilience.

Core Concept

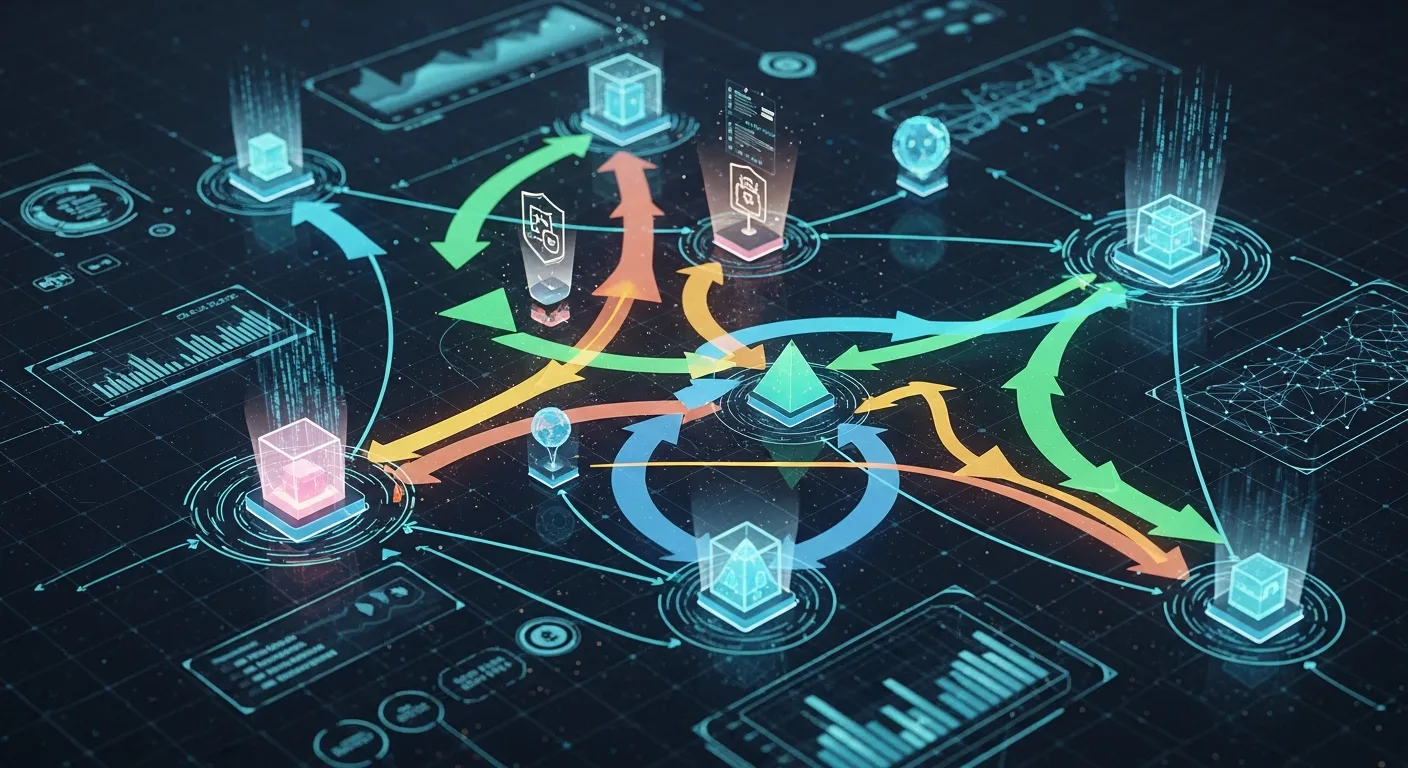

At its core, a service mesh is a network of lightweight proxies deployed alongside each service instance, orchestrated by a central control plane. The proxies intercept all inbound and outbound traffic, providing consistent policies for routing, retries, circuit breaking, and security without modifying application code.

Architecture Overview

The architecture separates responsibilities into two planes. The data plane consists of sidecar proxies that forward requests, collect telemetry, and enforce policies. The control plane handles service discovery and distributes configuration and policy to the proxies, often over gRPC or REST APIs. Because policy is defined centrally and pushed out to the proxies, operators can change behavior quickly and observe the whole mesh from one place.
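The split between the two planes can be illustrated with a toy model. This is a minimal sketch, not any real mesh's API: the `ControlPlane` and `SidecarProxy` classes, their method names, and the endpoint addresses are all hypothetical. It shows the control plane pushing versioned configuration to every registered proxy, and each proxy answering lookups from its local copy.

```python
from dataclasses import dataclass, field


@dataclass
class SidecarProxy:
    """Data-plane proxy (hypothetical): routes using only its cached config."""
    service: str
    routes: dict[str, list[str]] = field(default_factory=dict)
    config_version: int = 0

    def apply_config(self, version: int, routes: dict[str, list[str]]) -> None:
        # Ignore stale pushes so an out-of-order update cannot roll config back.
        if version > self.config_version:
            self.routes = {name: list(eps) for name, eps in routes.items()}
            self.config_version = version

    def resolve(self, destination: str) -> list[str]:
        # Purely local lookup: no control-plane call on the request path.
        return self.routes.get(destination, [])


@dataclass
class ControlPlane:
    """Holds the desired state and distributes it to every registered proxy."""
    proxies: list[SidecarProxy] = field(default_factory=list)
    routes: dict[str, list[str]] = field(default_factory=dict)
    version: int = 0

    def register(self, proxy: SidecarProxy) -> None:
        self.proxies.append(proxy)
        # Bring a newly registered proxy up to date with the current state.
        proxy.apply_config(self.version, self.routes)

    def set_endpoints(self, service: str, endpoints: list[str]) -> None:
        self.routes[service] = list(endpoints)
        self.version += 1
        for proxy in self.proxies:
            proxy.apply_config(self.version, self.routes)


if __name__ == "__main__":
    control_plane = ControlPlane()
    checkout = SidecarProxy("checkout")
    control_plane.register(checkout)
    control_plane.set_endpoints("payments", ["10.0.0.5:8080", "10.0.0.6:8080"])
    print(checkout.resolve("payments"))
```

Versioning the pushes is one simple way to keep proxies from applying an older configuration after a newer one; real control planes use more elaborate acknowledgement schemes.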

Key Components

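- Sidecar Proxy: intercepts each service instance's inbound and outbound traffic and applies routing, retries, and circuit breaking

- Control Plane: performs service discovery and distributes configuration and policy to the proxies

- Telemetry System: collects the latency, error-rate, and traffic data reported by the proxies

- Policy Engine: decides which services may communicate, based on service identity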

How It Works

When a service call is made, the request first reaches the local sidecar proxy, which applies the routing rules it has already received from the control plane; the proxy typically does not contact the control plane on each request. If a downstream instance is unhealthy, the proxy can automatically retry on another instance, apply a timeout, or trigger a circuit breaker. These events are recorded and streamed to a telemetry backend, giving operators real‑time insight into latency, error rates, and traffic patterns.
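The failure handling described above can be sketched in a few lines. This is an illustrative model under stated assumptions, not how any particular proxy implements it: `CircuitBreaker`, `call_with_failover`, and the `send` callable are hypothetical names, and `send` is assumed to enforce its own timeout by raising `TimeoutError`.

```python
import time


class CircuitBreaker:
    """Opens after `threshold` consecutive failures; allows a trial call
    once `reset_after` seconds have passed (the half-open state)."""

    def __init__(self, threshold=3, reset_after=30.0, clock=time.monotonic):
        self.threshold = threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the breaker is closed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # Half-open: let a trial request through after the cool-down.
        return self.clock() - self.opened_at >= self.reset_after

    def record(self, ok: bool) -> None:
        if ok:
            self.failures = 0
            self.opened_at = None
        else:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()


def call_with_failover(instances, send, breakers, max_attempts=3):
    """Try up to `max_attempts` instances in order, skipping open breakers."""
    attempts = 0
    last_error = None
    for instance in instances:
        if attempts >= max_attempts:
            break
        breaker = breakers.setdefault(instance, CircuitBreaker())
        if not breaker.allow():
            continue  # instance is considered unhealthy; do not send to it
        attempts += 1
        try:
            response = send(instance)
        except (ConnectionError, TimeoutError) as exc:
            breaker.record(False)
            last_error = exc
            continue  # retry on the next instance
        breaker.record(True)
        return response
    raise RuntimeError("no healthy instance available") from last_error
```

Keeping one breaker per instance means a single failing replica is taken out of rotation without affecting calls to its healthy peers.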

Use Cases

- Automatic retries with exponential backoff for transient failures

- Canary releases and A/B testing with weighted routing

- Mutual TLS between services as a foundation for zero‑trust networking

- Observability dashboards that aggregate mesh‑wide metrics
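Two of the use cases above, retries with exponential backoff and weighted routing for canary releases, reduce to small calculations. The sketch below is illustrative only; `backoff_delays`, `pick_backend`, and the version names `v1` and `v2` are hypothetical and do not correspond to any mesh's configuration schema.

```python
import random


def backoff_delays(base: float, factor: float, retries: int, cap: float) -> list[float]:
    """Delay before each retry: base * factor**n, capped at `cap` seconds."""
    return [min(cap, base * factor ** n) for n in range(retries)]


def pick_backend(weights: dict[str, int], rng: random.Random) -> str:
    """Choose a backend with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


if __name__ == "__main__":
    print(backoff_delays(1.0, 2.0, 4, 5.0))  # [1.0, 2.0, 4.0, 5.0]

    # Send roughly 10% of traffic to the canary version.
    rng = random.Random(42)
    picks = [pick_backend({"v1": 90, "v2": 10}, rng) for _ in range(10_000)]
    print(picks.count("v2") / len(picks))
```

In practice, retry delays are usually randomized (jitter) so that many clients do not retry in lockstep; that refinement is omitted here for brevity.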

Advantages

- Uniform resilience patterns across all services

- Decouples operational concerns from business code

- Improves visibility through centralized metrics and tracing

- Enables rapid policy changes without redeploying applications

Limitations

- Increased resource consumption due to sidecar proxies

- Operational complexity of managing the control plane

- Potential latency overhead in high‑frequency request paths

- Learning curve for teams new to mesh concepts

Comparison

Compared with traditional API gateways, a service mesh operates at the service‑to‑service level rather than at the edge, providing fine‑grained control for internal traffic. Unlike ad‑hoc libraries embedded in code, the mesh enforces policies uniformly without code changes. However, for simple monolithic workloads a full mesh may be overkill, and a lightweight gateway can suffice.

Performance Considerations

Performance impact depends on proxy implementation, hardware resources, and traffic volume. Modern proxies such as Envoy use asynchronous I/O and can be tuned with CPU pinning and connection pooling. Operators should benchmark latency and throughput before and after mesh adoption, and consider sidecar resource limits to avoid contention.
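A before-and-after benchmark is easier to interpret when it compares percentiles rather than averages, since proxy overhead often shows up in the tail. The following is a minimal sketch that assumes latency samples in milliseconds have already been collected by some load-testing tool; `percentile` and `overhead_report` are hypothetical helper names.

```python
import statistics


def percentile(samples: list[float], pct: int) -> float:
    """Return the pct-th percentile (1 to 99) using inclusive interpolation."""
    return statistics.quantiles(samples, n=100, method="inclusive")[pct - 1]


def overhead_report(baseline_ms: list[float], mesh_ms: list[float]) -> dict[str, float]:
    """Added latency at p50 and p99 when traffic flows through the sidecars."""
    return {
        f"p{p}_overhead_ms": percentile(mesh_ms, p) - percentile(baseline_ms, p)
        for p in (50, 99)
    }


if __name__ == "__main__":
    # Synthetic samples for illustration only, not measured results.
    baseline = [float(i) for i in range(1, 102)]
    with_mesh = [x + 2.0 for x in baseline]
    print(overhead_report(baseline, with_mesh))
```

The sample data here is synthetic; real measurements should come from the same workload and hardware in both runs so that the difference reflects the mesh and nothing else.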

Security Considerations

A mesh can enforce mutual TLS for all service communication, providing encryption and identity verification by default. Policy engines can restrict access based on service identities, reducing the attack surface. Nevertheless, securing the control plane itself is critical; it must be isolated, audited, and protected with strong authentication.
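Identity-based access control can be pictured as a default-deny rule check that runs after mutual TLS has verified the caller. This is a simplified sketch: `AllowRule`, `is_allowed`, and the service names are hypothetical, and real policy engines support much richer matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AllowRule:
    source: str                # caller identity, assumed already verified via mTLS
    destination: str           # service being called
    methods: frozenset[str]    # HTTP methods the caller may use


def is_allowed(rules: list[AllowRule], source: str, destination: str, method: str) -> bool:
    """Default-deny: a request passes only if at least one rule matches."""
    return any(
        rule.source == source
        and rule.destination == destination
        and method in rule.methods
        for rule in rules
    )


if __name__ == "__main__":
    rules = [AllowRule("checkout", "payments", frozenset({"POST"}))]
    print(is_allowed(rules, "checkout", "payments", "POST"))
    print(is_allowed(rules, "frontend", "payments", "POST"))
```

Starting from deny and listing explicit allowances keeps the reachable surface small: a service that is never named as a source cannot call anything.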

Future Trends

By 2026, service meshes are expected to integrate more tightly with serverless platforms, offering mesh capabilities for function‑as‑a‑service workloads. AI‑driven policy recommendation engines may suggest retry and timeout settings based on real‑time telemetry. Edge‑native meshes could extend resilience patterns to distributed IoT environments, unifying cloud and edge observability.

Conclusion

A service mesh transforms resilience from an afterthought into a built‑in capability of cloud‑native architectures. By abstracting fault‑handling, traffic management, and security into a dedicated layer, teams gain consistency, observability, and agility. While the added complexity and resource cost must be weighed, the benefits of higher uptime and faster iteration make the mesh a compelling choice for modern microservice ecosystems.