How Service Mesh Boosts Security for Cloud-Native Apps

Introduction

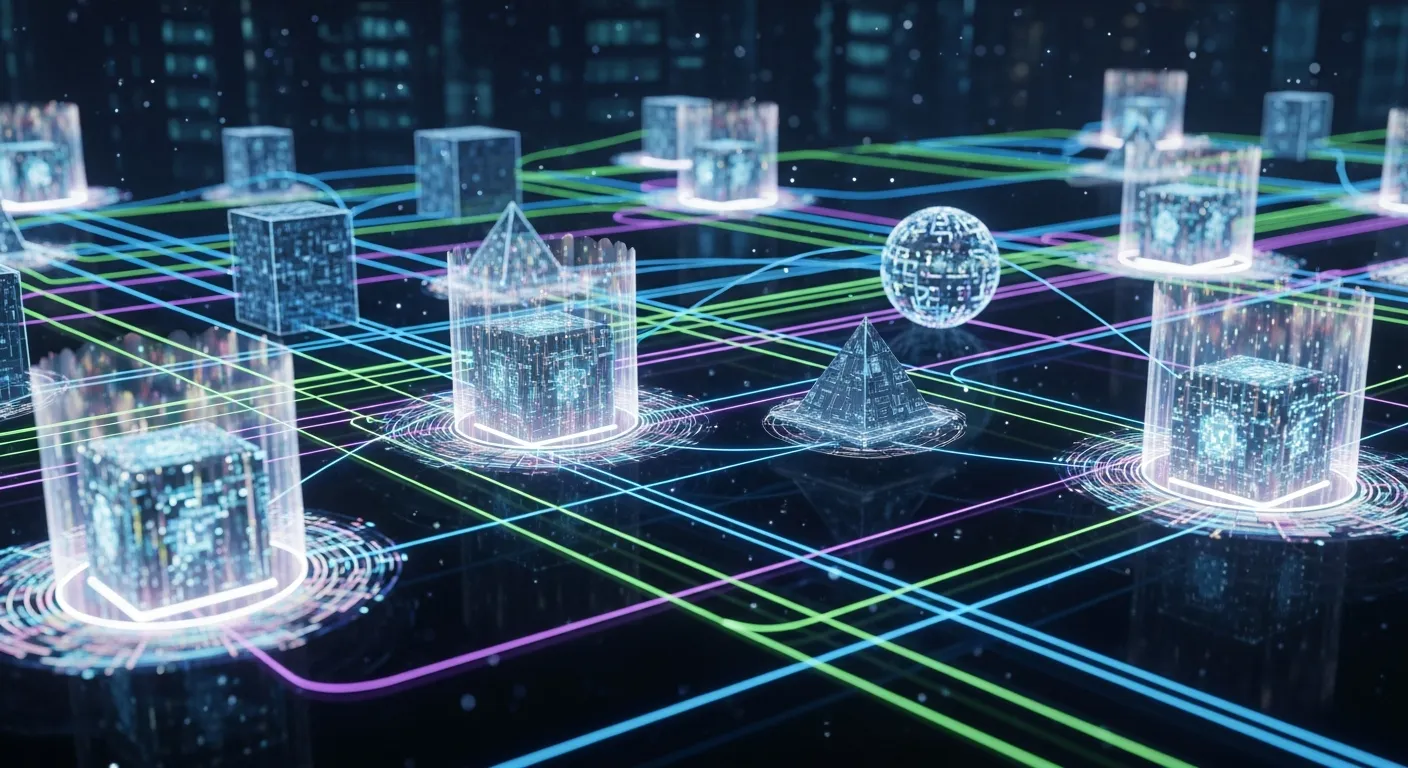

Microservice architectures have become the default for modern cloud‑native applications, but the proliferation of services creates a complex security landscape. Traditional perimeter defenses no longer protect internal east‑west traffic, and developers need a systematic way to enforce security policies without adding code to each service. This is where a service mesh steps in, providing a dedicated infrastructure layer that handles communication, observability and security across the entire application fabric.

Core Concept

A service mesh is an architectural pattern that deploys lightweight proxies alongside each service instance. These sidecar proxies intercept all inbound and outbound traffic, allowing the mesh to apply policies, collect telemetry and enforce encryption uniformly. By abstracting networking concerns from the application code, the mesh creates a programmable, language‑agnostic security perimeter that operates at the service level.

Architecture Overview

The mesh consists of two main planes. The control plane provides a centralized API for configuring policies, managing certificates and distributing configuration to the data plane. The data plane is made up of the sidecar proxies that run next to each service container or pod, handling request routing, mutual TLS termination and policy enforcement in real time. Together they form a closed loop that continuously reconciles desired state with actual traffic behavior.
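
The closed loop between the two planes can be illustrated with a minimal sketch. The `ControlPlane` and `SidecarProxy` classes, the version counter and the policy dictionary are all hypothetical names chosen for illustration; real meshes distribute configuration over streaming APIs rather than direct method calls.

```python
from dataclasses import dataclass, field


@dataclass
class SidecarProxy:
    """Hypothetical data-plane proxy holding the config it last received."""
    name: str
    config_version: int = 0
    policies: dict = field(default_factory=dict)


@dataclass
class ControlPlane:
    """Hypothetical control plane holding the desired state for all proxies."""
    desired_version: int = 0
    desired_policies: dict = field(default_factory=dict)

    def set_policies(self, policies: dict) -> None:
        # Every change to the desired state bumps the version number.
        self.desired_policies = dict(policies)
        self.desired_version += 1

    def reconcile(self, proxies: list) -> list:
        """Push the desired state to stale proxies; return the names updated."""
        updated = []
        for proxy in proxies:
            if proxy.config_version != self.desired_version:
                proxy.policies = dict(self.desired_policies)
                proxy.config_version = self.desired_version
                updated.append(proxy.name)
        return updated


control_plane = ControlPlane()
proxies = [SidecarProxy("orders"), SidecarProxy("payments")]
control_plane.set_policies({"mtls": "strict"})

first_pass = control_plane.reconcile(proxies)   # both proxies are stale
second_pass = control_plane.reconcile(proxies)  # nothing left to update
```

After the first pass every proxy carries the desired policies, so the second pass updates nothing. Running the loop repeatedly is what keeps the data plane converged on the desired state.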

Key Components

- control plane

- sidecar proxy

- policy engine

- certificate authority

- observability module

How It Works

When a service instance starts, the orchestrator injects a sidecar proxy into the same compute unit. All outgoing requests are sent to the local proxy, which applies the routing rules distributed by the control plane and selects the destination proxy. Before forwarding, the proxy establishes a mutual TLS session using certificates issued by the mesh’s built‑in CA, so that traffic is encrypted and each side verifies the other's identity. The policy engine evaluates each request against fine‑grained rules (such as source‑to‑destination pairs, request method or payload size) and either permits, modifies or blocks the traffic. Telemetry data is streamed back to the control plane for monitoring and alerting.
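
The policy evaluation step might look roughly like the following sketch. It assumes a default-deny model and covers only the permit and block outcomes, not request modification. The `Request` and `Rule` types, their field names and the example service names are hypothetical and do not correspond to any particular mesh's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    source: str          # identity of the calling service (from its certificate)
    destination: str     # identity of the called service
    method: str          # request method, e.g. "GET"
    payload_bytes: int   # size of the request body


@dataclass(frozen=True)
class Rule:
    """Hypothetical allow rule for one source-to-destination pair."""
    source: str
    destination: str
    methods: frozenset
    max_payload_bytes: Optional[int] = None

    def matches(self, request: Request) -> bool:
        if (request.source, request.destination) != (self.source, self.destination):
            return False
        if request.method not in self.methods:
            return False
        if (self.max_payload_bytes is not None
                and request.payload_bytes > self.max_payload_bytes):
            return False
        return True


def evaluate(rules: list, request: Request) -> str:
    """Return "allow" if any rule matches, otherwise "deny" (default deny)."""
    return "allow" if any(rule.matches(request) for rule in rules) else "deny"


rules = [
    Rule("frontend", "orders", frozenset({"GET", "POST"}), max_payload_bytes=4096),
]

ok = evaluate(rules, Request("frontend", "orders", "GET", 128))
wrong_method = evaluate(rules, Request("frontend", "orders", "DELETE", 0))
too_large = evaluate(rules, Request("frontend", "orders", "POST", 10_000))
unknown_source = evaluate(rules, Request("batch", "orders", "GET", 0))
```

Only the first request is allowed. The other three fail on method, payload size and source identity respectively, and fall through to the default deny.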

Use Cases

- automatic end‑to‑end encryption for all inter‑service calls

- zero‑trust access control based on service identity

- secure ingress and egress gateways for external traffic

- rate limiting and circuit breaking to mitigate denial‑of‑service attacks

- audit logging of every request for compliance reporting
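
The rate limiting use case above is often implemented with a token bucket. The sketch below is one common way to do it, not a description of how any specific mesh implements the feature. The class name and parameters are hypothetical, and the clock is passed in explicitly so the behavior is deterministic.

```python
class TokenBucket:
    """Minimal token-bucket limiter; `now` is a timestamp in seconds."""

    def __init__(self, rate_per_second: float, capacity: float, now: float = 0.0):
        self.rate = rate_per_second   # tokens added per second
        self.capacity = capacity      # maximum burst size
        self.tokens = capacity        # start with a full bucket
        self.last_refill = now

    def allow(self, now: float) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last_refill = max(self.last_refill, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


bucket = TokenBucket(rate_per_second=1.0, capacity=2.0)
# Three requests arrive at t=0 and a fourth arrives one second later.
decisions = [bucket.allow(t) for t in (0.0, 0.0, 0.0, 1.0)]
```

The first two requests use up the burst capacity, the third is rejected, and the fourth is accepted once a token has been refilled.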

Advantages

- centralized security policy management reduces configuration drift

- mutual TLS provides strong authentication without code changes

- observability is built in, enabling rapid detection of anomalies

- language‑agnostic enforcement works for any runtime environment

- incremental adoption allows gradual migration from monoliths

Limitations

- the extra proxy hop adds latency, which can affect latency‑sensitive workloads

- operational complexity increases with control plane management

- certificate rotation requires careful planning to avoid service disruption

- learning curve for developers and operators unfamiliar with mesh concepts

Comparison

Compared with traditional API gateways, a service mesh provides granular, per‑request security for internal traffic rather than just edge traffic. Host‑based firewalls operate at the network layer and cannot enforce application‑level policies such as method restrictions or payload inspection. The mesh fills this gap by operating at the application layer while still leveraging the performance of lightweight proxies.

Performance Considerations

Sidecar proxies introduce processing overhead on every hop, commonly on the order of 1 to 2 milliseconds of added latency, although the actual figure depends on the proxy, its configuration and the load. Optimizations such as connection pooling, HTTP/2 multiplexing and hardware acceleration can mitigate the impact. Scaling the control plane horizontally keeps configuration distribution responsive, while locality‑aware routing reduces cross‑region traffic and keeps latency predictable. Monitoring resource usage of proxies is essential to avoid CPU or memory bottlenecks in high‑throughput environments.
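
To see how per-hop overhead adds up across a call chain, a back-of-the-envelope estimate helps. The sketch below takes the 1 to 2 millisecond figure as input and assumes each service-to-service call crosses two proxies, the caller's sidecar and the callee's. The function name, the two-proxy assumption and the four-call chain are illustrative, not measured values.

```python
def mesh_overhead_ms(service_calls: int,
                     per_proxy_overhead_ms: float,
                     proxies_per_call: int = 2) -> float:
    """Estimate total latency added by sidecars along a sequential call chain.

    Assumes every call crosses `proxies_per_call` proxies and that each proxy
    adds the same fixed overhead; real overhead varies with load and config.
    """
    if service_calls < 0 or per_proxy_overhead_ms < 0 or proxies_per_call < 0:
        raise ValueError("inputs must be non-negative")
    return service_calls * proxies_per_call * per_proxy_overhead_ms


# A request that fans through four sequential service-to-service calls.
low_estimate = mesh_overhead_ms(service_calls=4, per_proxy_overhead_ms=1.0)
high_estimate = mesh_overhead_ms(service_calls=4, per_proxy_overhead_ms=2.0)
```

Under these assumptions the chain picks up between 8 and 16 milliseconds in total, which is why deep call graphs deserve more scrutiny than single calls.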

Security Considerations

Certificate lifecycle management is critical; automated rotation and revocation must be integrated with the mesh’s CA. The control plane itself becomes a high‑value target and should be hardened with multi‑factor authentication, network isolation and audit logging. Sidecar proxies expand the attack surface, so regular vulnerability scanning of proxy images is required. Finally, mesh policies should be versioned and tested in staging before production rollout to prevent accidental service disruption.
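
Automated rotation usually means renewing a certificate well before it expires, so that a failed renewal can be retried without an outage. The following sketch schedules rotation at a fixed fraction of the certificate's lifetime. The function names and the default fraction of 0.5 are assumptions for illustration and are not taken from any specific mesh.

```python
from datetime import datetime, timedelta, timezone


def rotation_time(not_before: datetime, not_after: datetime,
                  fraction: float = 0.5) -> datetime:
    """Return the moment at which a certificate should be renewed.

    `fraction` is the share of the validity period that may elapse before
    rotation; 0.5 (a hypothetical default) rotates at the halfway point.
    """
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must be strictly between 0 and 1")
    if not_after <= not_before:
        raise ValueError("not_after must be later than not_before")
    lifetime = not_after - not_before
    return not_before + lifetime * fraction


def needs_rotation(now: datetime, not_before: datetime, not_after: datetime,
                   fraction: float = 0.5) -> bool:
    """True once the rotation point has been reached."""
    return now >= rotation_time(not_before, not_after, fraction)


issued = datetime(2024, 1, 1, tzinfo=timezone.utc)
expires = issued + timedelta(hours=24)

rotate_at = rotation_time(issued, expires)
early = needs_rotation(issued + timedelta(hours=6), issued, expires)
late = needs_rotation(issued + timedelta(hours=13), issued, expires)
```

With a 24-hour certificate the rotation point falls 12 hours after issuance, leaving the second half of the lifetime as a retry window.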

Future Trends

By 2026 service meshes are expected to evolve into full‑stack security platforms that incorporate AI‑driven anomaly detection, intent‑based policy generation and seamless integration with zero‑trust network architectures. Meshes are likely to expose native APIs for serverless functions, edge devices and hybrid multi‑cloud environments, enabling a consistent security posture across any compute surface. Open standards are meant to drive interoperability, allowing organizations to swap implementations without rewriting policies: the Service Mesh Interface (SMI) pursued this goal until the project was archived, and the Kubernetes Gateway API has since taken over that role.

Conclusion

A service mesh transforms security from an afterthought into a core infrastructure capability for cloud‑native applications. By providing automated mutual TLS, fine‑grained policy enforcement and built‑in observability, the mesh reduces the exposure of internal traffic at the cost of a small, measurable latency overhead. While it adds operational complexity, the benefits of centralized control, compliance readiness and resilience make it a strategic investment for organizations seeking robust security in a microservice world.