Serverless Computing on OpenShift: Use Cases, Benefits and Limits

Introduction

Serverless computing has reshaped how developers build and run applications by abstracting infrastructure management. OpenShift, Red Hat's enterprise Kubernetes platform, now offers a robust serverless layer that blends the flexibility of containers with the simplicity of Function as a Service (FaaS). This article explains the core concepts, architecture, practical use cases, and the inherent limits you need to know before adopting serverless on OpenShift.

Core Concept

At its core, serverless on OpenShift decouples code execution from the servers that run it. Developers push functions or small services, and the platform scales them to zero when idle and out to many concurrent instances during spikes, so idle workloads hold no pod resources on the cluster. OpenShift Serverless builds on Knative and adds enterprise‑grade features such as multi‑tenant isolation, integration with CI/CD pipelines, and policy enforcement.

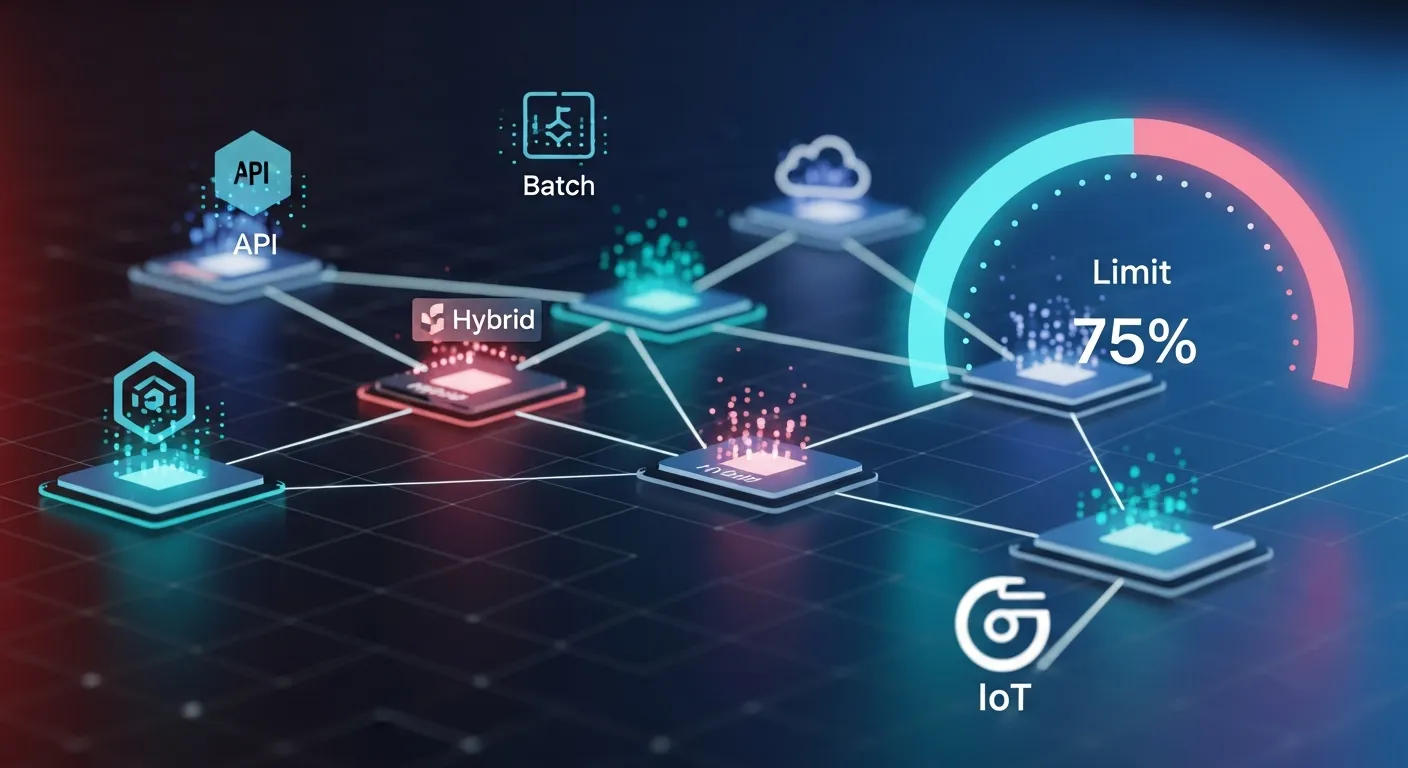

Architecture Overview

The architecture consists of three main layers: the control plane (Knative Serving and Eventing controllers), the data plane (Kourier or Istio ingress, the activator, and the application pods that are scaled up and down), and the OpenShift platform services (service mesh, monitoring, security). When a request arrives, the ingress routes it to the appropriate revision of a service. The autoscaler monitors request concurrency and creates or removes pods dynamically, while the eventing subsystem enables asynchronous triggers from sources such as Kafka or HTTP, delivered to services as CloudEvents.
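The concurrency-driven scaling decision described above can be sketched in a few lines. This is a simplified model, not the Knative autoscaler itself: the real autoscaler averages metrics over time windows and applies a target utilization, both of which are left out here. The function name and parameters are illustrative assumptions.

```python
import math


def desired_pods(observed_concurrency: float, target_per_pod: float,
                 min_scale: int = 0, max_scale: int = 0) -> int:
    """Return the pod count needed for the observed in-flight requests.

    Simplified model: ceil(observed / target), clamped to the configured
    bounds. In this sketch max_scale == 0 means "no upper bound".
    """
    if target_per_pod <= 0:
        raise ValueError("target_per_pod must be positive")
    wanted = math.ceil(observed_concurrency / target_per_pod)
    wanted = max(wanted, min_scale)      # keep warm replicas if requested
    if max_scale > 0:
        wanted = min(wanted, max_scale)  # respect the configured ceiling
    return wanted
```

With no traffic and `min_scale=0` the result is zero pods, which is the scale-to-zero case; setting `min_scale=1` trades that saving for a replica that is always warm.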

Key Components

- Knative Serving

- Knative Eventing

- OpenShift Service Mesh

- Kourier or Istio ingress

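- Knative Pod Autoscaler (KPA) for concurrency‑ and request‑based scaling, with the Horizontal Pod Autoscaler (HPA) as an alternative for CPU‑based scaling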

- OpenShift Monitoring (Prometheus, Grafana)

- OpenShift Security (SCC, RBAC)

How It Works

Developers package code as container images, or as source that is built into an image, and submit them via the OpenShift console or CLI. Each deployment is represented by a Knative Service object, from which Knative Serving derives a route, a configuration, and a new revision for every change. The ingress receives traffic, the route sends it to the latest revision by default (or splits it across revisions when configured to), and the autoscaler adjusts pod replicas based on request concurrency. Eventing sources listen for external events, translate them into CloudEvents, and deliver them to the appropriate service. All resources are managed as standard OpenShift objects, benefiting from the platform's governance and observability tools.
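As a rough illustration of the object involved, the sketch below builds a minimal Knative Service manifest as a Python dictionary. The `serving.knative.dev/v1` API group and the `template` and `traffic` fields are part of Knative Serving; the service name, namespace, and image reference used in the example are hypothetical placeholders.

```python
import json


def knative_service(name: str, image: str, namespace: str = "default") -> dict:
    """Build a minimal Knative Service manifest.

    Each change to spec.template produces a new revision; the traffic
    block below sends all requests to the latest one.
    """
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "template": {
                "spec": {"containers": [{"image": image}]},
            },
            "traffic": [{"latestRevision": True, "percent": 100}],
        },
    }


def to_json(manifest: dict) -> str:
    """Serialize the manifest; JSON output is also valid YAML."""
    return json.dumps(manifest, indent=2)
```

Calling `to_json(knative_service("hello", "quay.io/example/hello:latest"))` yields a document that could be saved to a file and applied with the cluster CLI; the image shown is only a placeholder.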

Use Cases

- Web request handling for variable traffic e‑commerce sites

- Data processing pipelines that react to object storage events

- Real‑time analytics functions triggered by Kafka streams

- API back‑ends for mobile applications with unpredictable usage patterns

- CI/CD job runners that scale to zero when not building
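For the request-handling cases in this list, the workload itself can be very small. The following is a minimal sketch of an HTTP service using only the Python standard library. It assumes the platform passes the listening port through the `PORT` environment variable, as Knative does for serving containers; the paths and response text are made up for illustration.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def build_response(path: str) -> tuple[int, bytes]:
    """Map a request path to a status code and a plain-text body."""
    if path == "/healthz":
        return 200, b"ok\n"
    return 200, f"Hello from {path}\n".encode()


class Handler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        status, body = build_response(self.path)
        self.send_response(status)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def main() -> None:
    # Fall back to 8080 when running outside the platform.
    port = int(os.environ.get("PORT", "8080"))
    HTTPServer(("0.0.0.0", port), Handler).serve_forever()
```

The container entrypoint would call `main()`. Keeping the response logic in a plain function such as `build_response` makes it testable without starting a server, and a small dependency-free image like this also helps startup time.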

Advantages

- Automatic scaling to zero frees the compute capacity that idle services would otherwise hold

- Unified developer experience with existing OpenShift tooling

- Enterprise security policies and multi‑tenant isolation built‑in

- Seamless integration with Kubernetes native services

- Fine‑grained metrics and logging via OpenShift monitoring stack

Limitations

- Cold start latency for infrequently used functions

- Maximum request timeout (upstream Knative defaults to 300 seconds per request, with a cluster‑wide ceiling that defaults to 600 seconds and can be changed by an administrator)

- Resource quotas can limit burst scaling in constrained clusters

- Complexity of tuning autoscaling parameters for high‑throughput workloads

- Limited support for languages that require heavy runtime initialization
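The quota limit above lends itself to a quick back-of-the-envelope check. The sketch below estimates how far a service can burst inside a namespace quota. It is simple arithmetic under stated assumptions: all numbers are supplied by the caller, and the per-pod figures should include whatever sidecars run alongside the application container.

```python
def max_burst_pods(quota_cpu_millicores: int, quota_memory_mib: int,
                   pod_cpu_millicores: int, pod_memory_mib: int) -> int:
    """Return how many pods fit in the quota; the scarcer resource wins."""
    if pod_cpu_millicores <= 0 or pod_memory_mib <= 0:
        raise ValueError("per-pod requests must be positive")
    return min(quota_cpu_millicores // pod_cpu_millicores,
               quota_memory_mib // pod_memory_mib)


def max_in_flight_requests(pods: int, container_concurrency: int) -> int:
    """Upper bound on simultaneous requests across all pods."""
    return pods * container_concurrency
```

For example, a quota of 4000 millicores and 16384 MiB with pods requesting 500 millicores and 256 MiB each is CPU-bound at 8 pods; at a per-pod concurrency limit of 10, that caps the service at 80 in-flight requests no matter how the autoscaler is tuned.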

Comparison

Compared with pure cloud FaaS platforms like AWS Lambda, OpenShift Serverless offers greater control over networking, compliance, and on‑premise deployment, but it requires managing the underlying cluster and may exhibit higher cold start latency. Compared with traditional Kubernetes deployments, it reduces operational overhead by automating scaling and routing, yet it abstracts less of the underlying infrastructure than fully managed serverless services.

Performance Considerations

To minimize cold starts, keep container images lightweight, keep a minimum number of replicas warm for latency‑critical functions, and tune how long an idle service waits before it scales to zero. Prefer the concurrency‑based autoscaler over CPU‑based scaling for request‑driven workloads. Monitor pod startup times with the OpenShift monitoring dashboards and adjust the 'containerConcurrency' setting to balance parallelism and resource usage.
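These tuning knobs map onto annotations and fields of the revision template. The sketch below assembles such a template as a Python dictionary. The `autoscaling.knative.dev/*` annotation keys and the `containerConcurrency` field come from Knative Serving; the default values and the image reference are illustrative assumptions, not recommendations.

```python
def tuned_template(image: str, min_scale: int = 1,
                   target_concurrency: int = 50,
                   container_concurrency: int = 100) -> dict:
    """Build a revision template with concurrency-based autoscaling settings."""
    if min_scale < 0:
        raise ValueError("min_scale must not be negative")
    if not 0 < target_concurrency <= container_concurrency:
        raise ValueError("target must be positive and within the hard limit")
    return {
        "metadata": {
            "annotations": {
                # Use the Knative autoscaler and scale on in-flight requests.
                "autoscaling.knative.dev/class": "kpa.autoscaling.knative.dev",
                "autoscaling.knative.dev/metric": "concurrency",
                # Soft target per pod; annotation values must be strings.
                "autoscaling.knative.dev/target": str(target_concurrency),
                # Replicas kept warm; 0 allows scale to zero.
                "autoscaling.knative.dev/min-scale": str(min_scale),
            }
        },
        "spec": {
            # Hard cap on simultaneous requests per pod.
            "containerConcurrency": container_concurrency,
            "containers": [{"image": image}],
        },
    }
```

The returned dictionary would go under `spec.template` of a Knative Service. Setting `min_scale=1` avoids cold starts at the price of one always-running pod, so it is usually reserved for the latency-critical paths.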

Security Considerations

OpenShift applies Security Context Constraints (SCC) and Role‑Based Access Control (RBAC) to each function pod, which limits what a pod can do and who can manage it. Use image signing and scanning in the CI pipeline to keep vulnerable images out of the cluster. Enable network policies to restrict outbound traffic from serverless pods, and validate incoming events at the service boundary so that malformed CloudEvents cannot be used for injection attacks.
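Guarding against malformed events can start with strict parsing at the service boundary. The sketch below checks a structured-mode JSON CloudEvent for the four attributes that the CloudEvents 1.0 specification requires (`specversion`, `id`, `source`, `type`) and rejects event types that are not on an allow-list. The size limit and the example type name are assumptions chosen for illustration.

```python
import json

REQUIRED_ATTRIBUTES = ("specversion", "id", "source", "type")


def parse_cloudevent(raw: bytes, allowed_types: frozenset,
                     max_bytes: int = 64 * 1024) -> dict:
    """Parse and validate a structured-mode CloudEvent, or raise ValueError."""
    if len(raw) > max_bytes:
        raise ValueError("event exceeds size limit")
    try:
        event = json.loads(raw)
    except ValueError as exc:  # covers invalid JSON and invalid UTF-8
        raise ValueError("event is not valid JSON") from exc
    if not isinstance(event, dict):
        raise ValueError("event must be a JSON object")
    for attr in REQUIRED_ATTRIBUTES:
        value = event.get(attr)
        if not isinstance(value, str) or not value:
            raise ValueError(f"missing or empty attribute: {attr}")
    if event["specversion"] != "1.0":
        raise ValueError("unsupported specversion")
    if event["type"] not in allowed_types:
        raise ValueError("event type not allowed")
    return event
```

A handler would call it with something like `parse_cloudevent(body, frozenset({"com.example.object.created"}))` and return an HTTP 400 on `ValueError`. Validation of the `data` payload itself is application-specific and is not covered here.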

Future Trends

By 2026 serverless on OpenShift is expected to integrate deeper with AI/ML inference workloads, offering GPU‑accelerated function pods and edge‑centric deployments via OpenShift's distributed architecture. Enhanced autoscaling algorithms using predictive analytics will further reduce latency, while tighter coupling with service mesh telemetry will provide real‑time cost optimization across hybrid cloud environments.

Conclusion

Serverless computing on OpenShift brings the agility of functions to the reliability and security of an enterprise Kubernetes platform. It introduces operational considerations such as cold starts and scaling limits, but the benefits of resource efficiency, developer productivity, and integrated observability make it a compelling choice for modern cloud‑native applications. Understanding the architecture, use cases, and constraints will help teams design resilient, scalable solutions on OpenShift's serverless capabilities.