Why Observability Is Essential for Modern Distributed Systems

Introduction

In today’s cloud-native world, applications are split across dozens of services, containers and geographic regions, which makes failures hard to predict and diagnose. Observability gives engineers the visibility they need to keep such complex systems healthy.

Core Concept

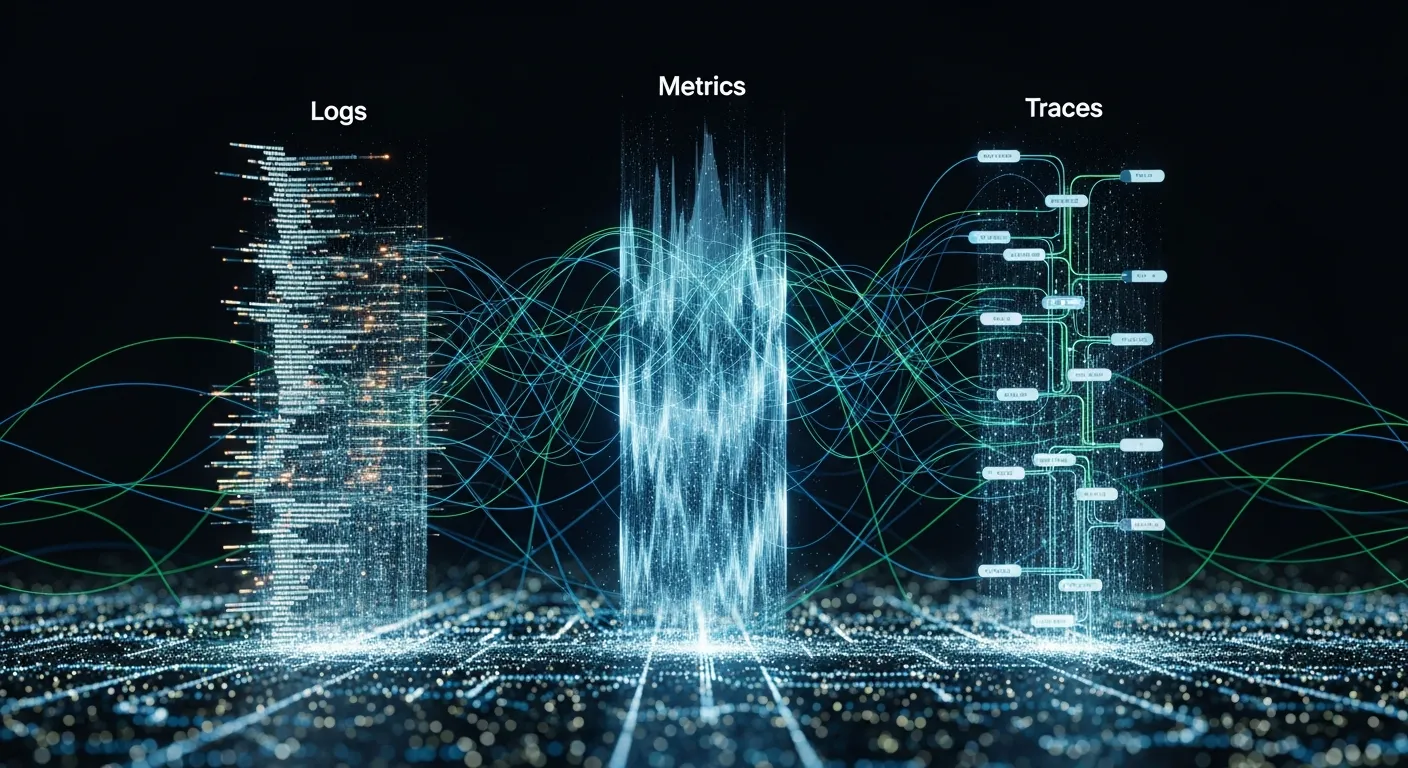

Observability is the ability to infer the internal state of a system from the data it produces, typically captured as metrics, traces and logs, allowing teams to understand behavior without invasive debugging.

Architecture Overview

A typical observability stack consists of agents embedded in each service, a data pipeline that enriches and transports signals, a storage layer optimized for time series, and visualization tools that surface insights to operators.

Key Components

- Metrics collection

- Distributed tracing

- Log aggregation

- Alerting and dashboards

- Service mesh telemetry

How It Works

Agents collect raw data, tag it with context such as service name and request ID, then forward it to a collector. The collector normalizes the payload, applies sampling or aggregation, and writes it to specialized back-ends. Query engines retrieve the data for dashboards or alert rules, while correlation engines stitch traces to logs for end-to-end request visibility.
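The flow described above can be sketched in a few lines of Python. This is a minimal in-memory illustration, not a real agent or collector: the `tag` function, the `Collector` class, its `keep_every_nth_log` parameter and the record fields are all hypothetical names chosen for this example, and the "back-ends" are plain lists.

```python
import time
from collections import defaultdict


def tag(signal, service, request_id):
    """Agent step: attach context to a raw signal before forwarding it."""
    return {**signal, "service": service, "request_id": request_id}


class Collector:
    """Hypothetical collector: normalizes, samples, and stores signals in memory."""

    def __init__(self, keep_every_nth_log=1):
        self.keep_every_nth_log = keep_every_nth_log
        self._log_count = 0
        self.backends = defaultdict(list)  # one list per signal kind

    def ingest(self, signal):
        """Return True if the signal was stored, False if sampling dropped it."""
        # Normalize: lower-case the kind and make sure a timestamp is present.
        record = dict(signal)
        record["kind"] = record["kind"].lower()
        record.setdefault("timestamp", time.time())

        # Sample: keep only every n-th log record; other kinds pass through.
        if record["kind"] == "log":
            self._log_count += 1
            if self._log_count % self.keep_every_nth_log != 0:
                return False

        self.backends[record["kind"]].append(record)
        return True

    def correlate(self, request_id):
        """Stitch together the spans and logs that share a request ID."""
        return {
            "spans": [s for s in self.backends["trace"] if s["request_id"] == request_id],
            "logs": [r for r in self.backends["log"] if r["request_id"] == request_id],
        }


# Usage: one span and one log line from the same request end up in one view.
collector = Collector()
collector.ingest(tag({"kind": "TRACE", "name": "GET /cart", "duration_ms": 42}, "cart", "req-1"))
collector.ingest(tag({"kind": "log", "message": "cache miss"}, "cart", "req-1"))
request_view = collector.correlate("req-1")
```

The shared `request_id` is what makes correlation possible: without it, the span and the log line would sit in separate back-ends with nothing to join them on.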

Use Cases

- Detecting latency spikes in microservice calls

- Identifying memory leaks in containerized workloads

- Root cause analysis after a cascade failure

- Capacity planning for autoscaling clusters
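As an illustration of the first use case, one simple way to flag a latency spike is to compare a high percentile of recent request latencies against the same percentile of a baseline window. The sketch below assumes latencies are available as plain lists of milliseconds; the function names, the 95th percentile and the 2x factor are illustrative choices, not recommendations from any particular tool.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def is_latency_spike(baseline_ms, recent_ms, pct=95, factor=2.0):
    """Flag a spike when the recent percentile exceeds factor times the baseline percentile."""
    return percentile(recent_ms, pct) > factor * percentile(baseline_ms, pct)


# Usage: a single slow request among twenty does not move the 95th percentile,
# but two slow requests do.
baseline = [100] * 20
one_outlier = [100] * 19 + [500]
two_outliers = [100] * 18 + [450, 500]
spike_with_one = is_latency_spike(baseline, one_outlier)
spike_with_two = is_latency_spike(baseline, two_outliers)
```

Using a percentile rather than the mean keeps one unusually slow request from triggering an alert while still reacting when a meaningful share of traffic slows down.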

Advantages

- Faster mean time to resolution

- Proactive anomaly detection

- Improved system reliability

- Data-driven capacity decisions

- Enhanced stakeholder confidence

Limitations

- High data volume can increase storage cost

- Complex instrumentation may affect performance

- Separating signal from noise requires skilled analysis

- Tool integration can be fragmented

Comparison

Traditional monitoring often relies on threshold alerts on isolated metrics, which catch the failure modes that were anticipated in advance. Observability combines the three pillars of metrics, traces and logs into a holistic view, so engineers can also investigate behavior that no alert was written for.

Performance Considerations

Instrumentation should be lightweight, sampling rates must balance fidelity and overhead, and back-end storage should support high write throughput without impacting the production workload.
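To make the sampling trade-off concrete, the sketch below shows one common approach: a deterministic decision derived from the trace ID, so every service that sees the same trace makes the same keep-or-drop choice without coordination. The function name `should_sample` and the use of SHA-256 are assumptions made for this example; real tracing libraries ship their own samplers.

```python
import hashlib


def should_sample(trace_id: str, rate: float) -> bool:
    """Keep roughly `rate` of all traces, deciding consistently per trace ID."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0.0 and 1.0")
    # Hash the trace ID and read the first 8 bytes as an integer in [0, 2**64).
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")
    # Compare in integer space so rate=1.0 keeps everything and rate=0.0 keeps nothing.
    return bucket < int(rate * 2**64)


# Usage: sample about 10% of traces; the same ID always yields the same answer.
kept = [tid for tid in (f"trace-{i}" for i in range(1000)) if should_sample(tid, 0.10)]
```

A lower rate reduces overhead and storage at the price of fidelity: rare failures are more likely to fall into the dropped portion, which is why some setups sample errors at a higher rate than successful requests.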

Security Considerations

Data collected may contain sensitive payloads, so encryption in transit and at rest is required, access controls must be enforced on dashboards, and retention policies should comply with privacy regulations.
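One way to reduce the risk of sensitive payloads reaching the back-end is to redact records before they leave the service. The following is a minimal sketch under stated assumptions: the key list, the email pattern and the `redact` function are hypothetical, and a production setup would need a policy that covers its own data types.

```python
import re

# Hypothetical deny-list of field names whose values are always masked.
SENSITIVE_KEYS = {"password", "authorization", "token", "ssn"}
# Deliberately simple email pattern; it is illustrative, not exhaustive.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(record):
    """Return a copy of `record` with sensitive values masked.

    Recurses into nested dicts; values inside lists are left untouched
    in this sketch.
    """
    clean = {}
    for key, value in record.items():
        if key.lower() in SENSITIVE_KEYS:
            clean[key] = "[REDACTED]"
        elif isinstance(value, dict):
            clean[key] = redact(value)
        elif isinstance(value, str):
            clean[key] = EMAIL_PATTERN.sub("[EMAIL]", value)
        else:
            clean[key] = value
    return clean


# Usage: mask credentials and email addresses in a log record before export.
safe_record = redact({
    "message": "login failed for bob@example.com",
    "password": "hunter2",
    "http": {"Authorization": "Bearer abc", "status": 401},
})
```

Redacting at the source complements, rather than replaces, encryption and access control: data that is never collected does not need to be protected downstream.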

Future Trends

By 2026, observability platforms are expected to embed AI-driven root cause suggestions, automatically correlate signals across clusters, support serverless and edge environments, and offer unified, privacy-first data pipelines.

Conclusion

Without observability, distributed systems become black boxes where problems surface late and cost more to fix. Investing in a robust observability strategy turns complexity into actionable insight, driving reliability, performance and business value.